Project Gallery

01 Project Overview

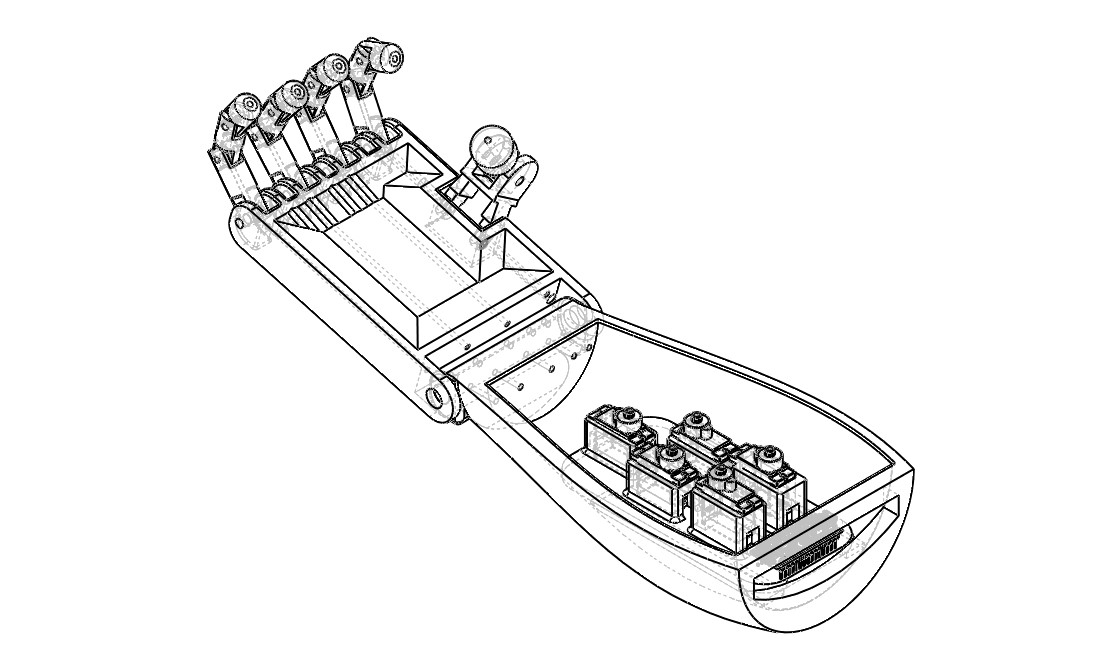

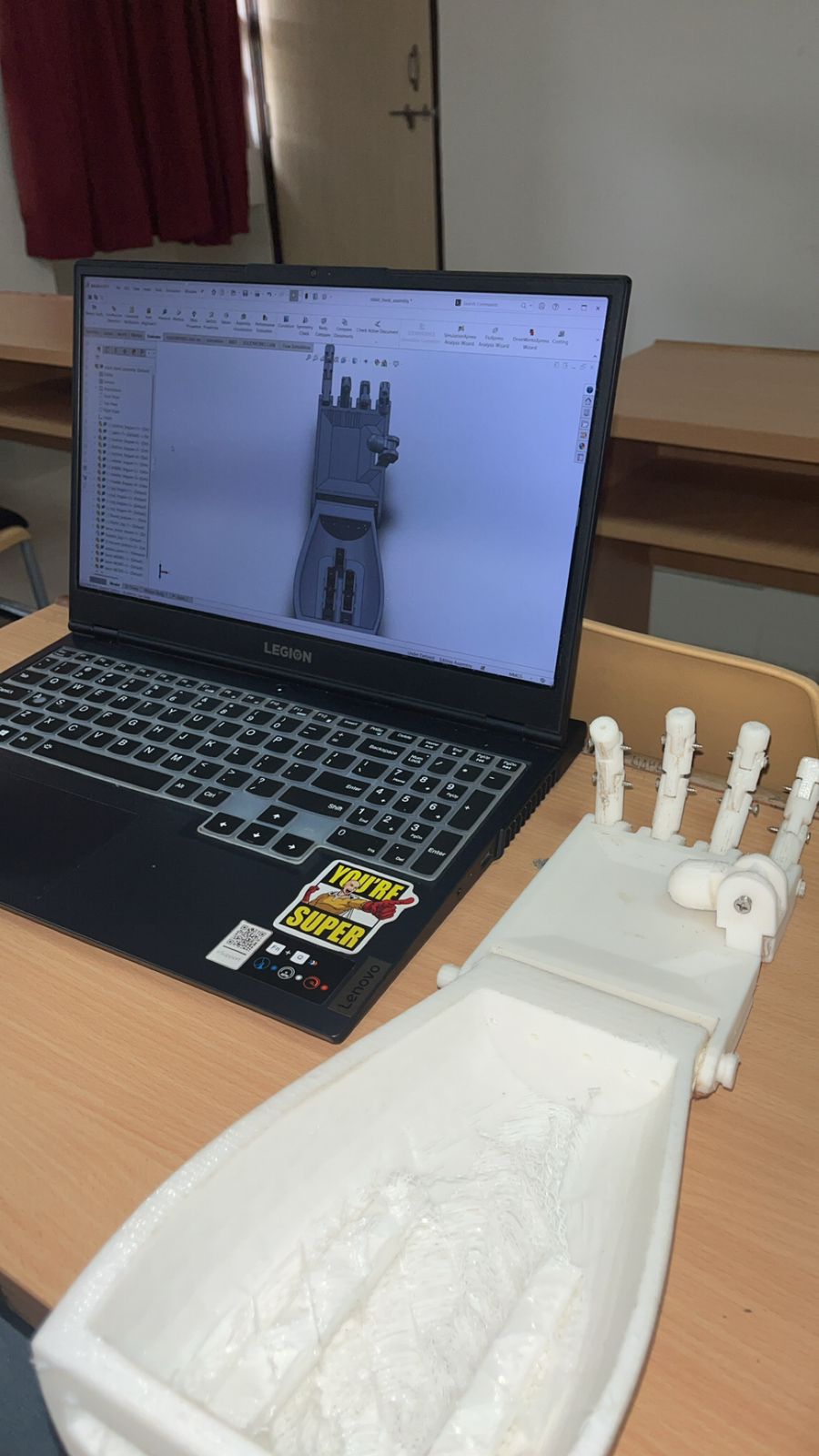

Developed a gesture-controlled anthropomorphic robotic hand that mirrors human hand movements using MediaPipe hand tracking, OpenCV computer vision, and servo motor actuation. The system replicates gestures with 100-150 ms end-to-end latency, enabling intuitive human-robot interaction for applications in prosthetics and assistive robotics. The hand is fully 3D-printed in PLA and has five fingers with 14 degrees of freedom. Five servo motors drive these joints through a tendon-based actuation system that mimics human hand anatomy.
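Below is a minimal sketch of how hand landmarks could be turned into servo commands. It assumes MediaPipe's standard 21-point hand layout: wrist 0, thumb 1-4, index 5-8, middle 9-12, ring 13-16, pinky 17-20. Each landmark is given as an (x, y, z) tuple. Several choices are illustrative assumptions, not the project's actual calibration: measuring flexion as the bend at each finger's middle joint, treating 90° as full flex, and using a 0-180° servo range.

```python
import math
from typing import Dict, Sequence, Tuple

Point = Tuple[float, float, float]

# MediaPipe Hands landmark indices used per finger: (proximal, middle, distal) joints.
# Thumb uses MCP/IP/TIP; the other fingers use MCP/PIP/DIP.
FINGER_JOINTS = {
    "thumb": (2, 3, 4),
    "index": (5, 6, 7),
    "middle": (9, 10, 11),
    "ring": (13, 14, 15),
    "pinky": (17, 18, 19),
}

def joint_angle(a: Point, b: Point, c: Point) -> float:
    """Angle at b in degrees between segments b->a and b->c (180 = straight)."""
    v1 = [a[i] - b[i] for i in range(3)]
    v2 = [c[i] - b[i] for i in range(3)]
    n1 = math.sqrt(sum(p * p for p in v1))
    n2 = math.sqrt(sum(q * q for q in v2))
    if n1 == 0.0 or n2 == 0.0:
        return 180.0  # degenerate input: treat as a straight joint
    cos_t = sum(p * q for p, q in zip(v1, v2)) / (n1 * n2)
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))

def flexion_to_servo(flexion_deg: float, max_flexion: float = 90.0,
                     servo_min: int = 0, servo_max: int = 180) -> int:
    """Linearly map flexion (0 = straight) onto a servo angle, clamped to range."""
    frac = max(0.0, min(1.0, flexion_deg / max_flexion))
    return round(servo_min + frac * (servo_max - servo_min))

def landmarks_to_servo_angles(landmarks: Sequence[Point]) -> Dict[str, int]:
    """One servo angle per finger, derived from the bend at its middle joint."""
    angles = {}
    for name, (a, b, c) in FINGER_JOINTS.items():
        flexion = 180.0 - joint_angle(landmarks[a], landmarks[b], landmarks[c])
        angles[name] = flexion_to_servo(flexion)
    return angles
```

In a live pipeline the tuples would come from each MediaPipe landmark's `x`, `y`, and `z` fields. Working with joint angles rather than raw coordinates keeps the mapping roughly independent of how far the hand is from the camera.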

02 Key Features & Achievements

Gesture-controlled anthropomorphic robotic hand with 5 fingers and 14 degrees of freedom

MediaPipe Hands API integration for detecting 21 key landmarks on the human hand

Computer vision pipeline with OpenCV for image processing and hand detection

Embedded control system using an Arduino Uno to drive 5 servo motors via PWM signals

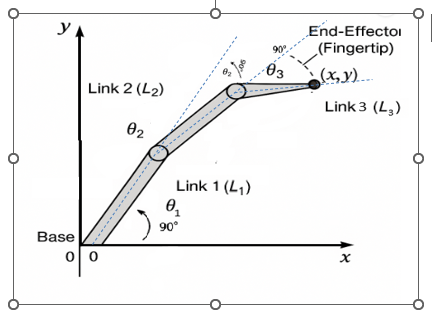

Forward and inverse kinematics analysis for 3-link planar finger manipulators

FEA stress analysis using SolidWorks Simulation to validate structural integrity

Tendon-based actuation system mimicking human hand anatomy

Serial communication protocol for data transfer between Python and Arduino
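The forward and inverse kinematics of a 3-link planar finger can be sketched as follows. The phalanx lengths are hypothetical placeholders. Angles are in radians, each measured relative to the previous link. Three joints in a plane are redundant for a 2D fingertip position, so this version also takes the fingertip orientation phi = θ1 + θ2 + θ3 as input to resolve the redundancy. That is one common choice; the project's own formulation is not described.

```python
import math
from typing import Tuple

# Hypothetical phalanx lengths in mm (proximal, middle, distal); not measured values.
L1, L2, L3 = 45.0, 25.0, 20.0

def forward_kinematics(t1: float, t2: float, t3: float,
                       l1: float = L1, l2: float = L2, l3: float = L3) -> Tuple[float, float, float]:
    """Fingertip (x, y) and orientation phi for MCP, PIP, DIP angles in radians."""
    a1, a2, a3 = t1, t1 + t2, t1 + t2 + t3  # absolute link angles
    x = l1 * math.cos(a1) + l2 * math.cos(a2) + l3 * math.cos(a3)
    y = l1 * math.sin(a1) + l2 * math.sin(a2) + l3 * math.sin(a3)
    return x, y, a3

def inverse_kinematics(x: float, y: float, phi: float,
                       l1: float = L1, l2: float = L2, l3: float = L3) -> Tuple[float, float, float]:
    """Joint angles placing the tip at (x, y) with orientation phi (t2 >= 0 branch)."""
    # Step back along the distal link to locate the DIP joint.
    xw = x - l3 * math.cos(phi)
    yw = y - l3 * math.sin(phi)
    # Standard two-link solution for the proximal and middle links.
    c2 = (xw * xw + yw * yw - l1 * l1 - l2 * l2) / (2 * l1 * l2)
    if abs(c2) > 1.0 + 1e-9:
        raise ValueError("target is out of reach")
    c2 = max(-1.0, min(1.0, c2))  # absorb rounding at full extension
    t2 = math.atan2(math.sqrt(1.0 - c2 * c2), c2)
    t1 = math.atan2(yw, xw) - math.atan2(l2 * math.sin(t2), l1 + l2 * math.cos(t2))
    t3 = phi - t1 - t2
    return t1, t2, t3
```

A round trip through both functions should reproduce the original joint angles. For example, `inverse_kinematics(*forward_kinematics(0.2, 0.5, 0.3))` gives back approximately `(0.2, 0.5, 0.3)`.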

03 Technical Stack

04 Challenges & Solutions

Challenge 1

Servo Synchronization Problem

Solution

Implemented a coordinated motion algorithm that computes all servo target positions at once and moves the servos in sync. Also built a calibration lookup table for each servo to compensate for its non-linear PWM-to-position mapping and dead zones, giving smooth, natural-looking gestures.
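One possible implementation of the calibration and coordinated motion is sketched below. `angle_to_pulse` interpolates a per-servo lookup table piecewise-linearly. `synchronized_path` splits a move into equal steps so every servo starts and finishes together. Three details are assumptions for illustration: the table values, the step count, and interpreting the dead zone as a deadband that suppresses tiny moves.

```python
from bisect import bisect_right
from typing import List, Sequence, Tuple

# Hypothetical calibration table for one servo: (joint angle in deg, pulse width in µs).
# Real entries would be measured per servo; these numbers are illustrative only.
CAL_INDEX = [(0, 600), (45, 1050), (90, 1450), (135, 1900), (180, 2400)]

def angle_to_pulse(angle: float, table: Sequence[Tuple[float, int]]) -> int:
    """Piecewise-linear lookup in a calibration table, clamped at both ends."""
    xs = [a for a, _ in table]
    if angle <= xs[0]:
        return table[0][1]
    if angle >= xs[-1]:
        return table[-1][1]
    i = bisect_right(xs, angle)
    (a0, p0), (a1, p1) = table[i - 1], table[i]
    return round(p0 + (p1 - p0) * (angle - a0) / (a1 - a0))

def synchronized_path(current: Sequence[float], target: Sequence[float],
                      steps: int, deadband: float = 1.0) -> List[List[float]]:
    """Intermediate poses in which every servo covers the same fraction of its
    move per step, so all servos arrive together. Changes smaller than
    `deadband` degrees are suppressed to avoid jitter inside the dead zone."""
    deltas = [t - c if abs(t - c) >= deadband else 0.0 for c, t in zip(current, target)]
    return [[c + d * k / steps for c, d in zip(current, deltas)]
            for k in range(1, steps + 1)]
```

Each pose in the returned path would be converted with `angle_to_pulse` and sent once per control tick. Fingers with a longer move simply travel faster, so no finger finishes late.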

Challenge 2

Tendon Routing Nightmare

Solution

Over multiple design iterations, added small pulleys and guides and carefully optimized the routing paths of the five tendons through the compact palm. This reduced friction, prevented tangling, and made finger movements smoother.

Challenge 3

Processing Latency

Solution

Optimized the entire pipeline by cutting unnecessary processing, using efficient serial communication, and profiling the code to identify bottlenecks. The result is roughly 100-150 ms total latency (camera capture ~33 ms, MediaPipe processing ~10-20 ms, serial communication ~5-10 ms, servo response ~50-100 ms), which is acceptable for most gestures.
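The source does not specify the serial message format, so what follows is a hypothetical compact binary frame. It contains a 0xFF header byte (never a valid 0-180 angle), one byte per servo, and a one-byte additive checksum. With 8N1 framing each byte costs 10 bit-times. At the same baud rate, this 7-byte frame therefore needs less than half the wire time of an ASCII line such as `0,45,90,135,180\n` (16 bytes).

```python
import struct
from typing import List, Sequence

HEADER = 0xFF  # angles are limited to 0-180, so 0xFF never appears in the payload
FRAME_LEN = 7  # header + 5 angle bytes + checksum

def encode_frame(angles: Sequence[int]) -> bytes:
    """Pack five servo angles into a frame: header, 5 angles, checksum."""
    if len(angles) != 5 or any(not 0 <= a <= 180 for a in angles):
        raise ValueError("expected five integer angles in 0..180")
    payload = struct.pack("5B", *angles)
    checksum = sum(payload) & 0xFF
    return bytes([HEADER]) + payload + bytes([checksum])

def decode_frames(stream: bytes) -> List[List[int]]:
    """Scan a byte stream, resynchronizing on the header and dropping bad frames."""
    frames = []
    i = 0
    while i + FRAME_LEN <= len(stream):
        if stream[i] != HEADER:
            i += 1
            continue
        payload = stream[i + 1:i + 6]
        if (sum(payload) & 0xFF) == stream[i + 6] and all(b <= 180 for b in payload):
            frames.append(list(payload))
            i += FRAME_LEN
        else:
            i += 1  # checksum mismatch: slide forward one byte and look for the next header
    return frames
```

On the host, the encoded bytes could be sent with pyserial's `serial.Serial(...).write(...)`. The Arduino sketch would then need equivalent header-and-checksum parsing.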

Challenge 4

Lighting and Background Sensitivity

Solution

Added adaptive thresholding and background subtraction as preprocessing steps. These improved hand-detection reliability under varying lighting and against cluttered backgrounds, although the system still works best in controlled environments.
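The following pure-Python sketch illustrates the background-subtraction idea, assuming grayscale frames given as nested lists. Pixels that differ from a running-average background by more than a threshold are marked as foreground. Only background pixels are blended into the model, so slow lighting drift is absorbed. The `alpha` and `threshold` values are illustrative. In an OpenCV pipeline, `cv2.accumulateWeighted` with `cv2.absdiff`, or `cv2.createBackgroundSubtractorMOG2`, would typically fill this role, and `cv2.adaptiveThreshold` would handle the thresholding step.

```python
from typing import List

Frame = List[List[float]]  # grayscale intensities, 0-255

class RunningAverageBackground:
    """Exponential running-average background model with a fixed difference threshold."""

    def __init__(self, first_frame: Frame, alpha: float = 0.05, threshold: float = 25.0):
        self.bg = [list(row) for row in first_frame]  # copy so the caller's frame is untouched
        self.alpha = alpha
        self.threshold = threshold

    def apply(self, frame: Frame) -> List[List[int]]:
        """Return a 0/1 foreground mask and update the model at background pixels."""
        mask = []
        for y, row in enumerate(frame):
            mask_row = []
            for x, value in enumerate(row):
                is_fg = abs(value - self.bg[y][x]) > self.threshold
                mask_row.append(1 if is_fg else 0)
                if not is_fg:
                    # Blend slowly so gradual lighting changes become part of the background.
                    self.bg[y][x] += self.alpha * (value - self.bg[y][x])
            mask.append(mask_row)
        return mask
```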

05 Key Achievements

Real-time gesture replication with 100-150ms end-to-end latency (camera capture to servo response)
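Summing the per-stage estimates from Challenge 3 gives a quick sanity check on this figure. The ranges add up to about 98-163 ms, which brackets the quoted 100-150 ms, and servo response is the largest single contributor. The sketch below does that arithmetic. It also includes a hypothetical `timed` helper, built on `time.perf_counter`, of the kind that could be used to profile each stage.

```python
import time
from contextlib import contextmanager
from typing import Dict, Iterator, Tuple

# Per-stage latency ranges in milliseconds, as listed under Challenge 3.
STAGES: Dict[str, Tuple[float, float]] = {
    "camera capture": (33.0, 33.0),
    "MediaPipe processing": (10.0, 20.0),
    "serial communication": (5.0, 10.0),
    "servo response": (50.0, 100.0),
}

def latency_budget(stages: Dict[str, Tuple[float, float]]) -> Tuple[float, float]:
    """Best-case and worst-case end-to-end latency: the sum of per-stage bounds."""
    return sum(lo for lo, _ in stages.values()), sum(hi for _, hi in stages.values())

def dominant_stage(stages: Dict[str, Tuple[float, float]]) -> str:
    """Stage with the largest worst-case latency, i.e. the first optimization target."""
    return max(stages, key=lambda name: stages[name][1])

timings: Dict[str, float] = {}

@contextmanager
def timed(stage: str) -> Iterator[None]:
    """Record the wall-clock duration of the enclosed block in ms under `stage`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[stage] = (time.perf_counter() - start) * 1000.0
```

Wrapping each pipeline stage in `with timed("..."):` and comparing the recorded values against `STAGES` would show which stage drifts from its budget.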